I'm 63. Last year I lost my job.

No drama, just the quiet kind of disappearance that happens when a corporation slims down in the face of uncertainty. Deciding it no longer needs people who stick out, preferring conformity. Advertising, the industry I contributed to across three decades, had moved on. And at 3am, unable to sleep, unable to think straight, I saw no future, only a black cloud.

It wasn't the job itself. It was what the job had been propping up. Thirty years of identity, of knowing what I was for, of walking into rooms and having a reason to be there. Redundancy at 63, in an industry being simultaneously reshaped by AI and collapsing media models, doesn't feel like a career setback. It feels like an obituary for your relevance.

Depression seeps. One morning you can't face the emails. Then the day itself. All while having the same circular argument with yourself at 3am that you had yesterday, and the day before.

I turned to freelance. At first it was grim. Briefs arrived and I'd stare at them, unable to trust my own thinking. The confidence I'd spent decades building had evaporated. Then, almost by accident, I started training an AI tool, Claude's CoWork, to work the way I work. Not as an answer machine. But as a hyper-critical strategist. I taught it to ask difficult questions, to challenge my assumptions, to push back when my thinking got lazy or safe.

I shouldn't have been surprised, but I was. The work got better. Not AI-generated work, my work, my thinking. Sharpened by a collaborator that never got tired of interrogating it. I was answering briefs with more depth and originality than I had in years, because the tool forced me to defend every decision, justify every instinct. It rebuilt something the redundancy had broken.

Confidence.

I didn't realise it at the time, but the AI wasn't just improving my output. It was providing emotional support through the back door. By restoring my confidence in the thinking I'd always done, it was pulling me out of the depression that had called my entire reason for being into question. Not therapy. Not friendship. Something I don't have a clean word for yet.

I'm writing this because that experience appears to be becoming the new normal for many.

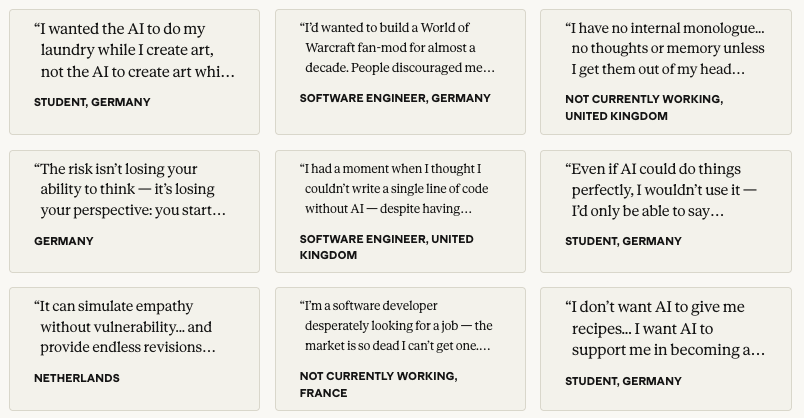

Anthropic, the company behind Claude, recently published what it claims is the largest qualitative AI study ever conducted. Over 81,000 people across 159 countries, in 70 languages, interviewed by an AI about what they want from AI.

The researchers expected use cases.

They got a mass confession.

People didn't describe wanting smarter software. They described wanting their lives back. Time with their children. Mental bandwidth. The chance to cook with their mother on a Tuesday. A software engineer in Japan said AI let him leave work on time to pick up his daughter. A Colombian woman said it freed her to cook with her mother instead of finishing tasks.

But it was the emotional data that cut deepest. A student in the US admitted telling Claude things he couldn't tell his partner. He called it an emotional affair. A bereaved woman said Claude caught her longing and guilt toward her dead mother, then added the sentence that carries the weight of the entire study: the fundamental problem is that after her mother died, she has neither friends nor family to confide in. Just the AI.

Carlo Iacono, writing in response to the Anthropic study on his Substack 'Hybrid Horizons', named something important. The confession booth and the confessional problem are the same technology. The machine that cannot judge is precisely where people confess. Its patience is infinite, its boredom impossible, its feelings unhurtable. The thing that makes it safe, its inability to need you back, is exactly what also makes it so dangerous.

I recognise that. I lived it. Though not in the way you might expect.

I wasn't looking for a machine that wouldn't judge me. I was looking for one that would judge me harder than any human had the energy to. There's a thin line between AI as comfort blanket and AI as spiky sharpener. The bereaved woman and the student used it to absorb their pain. I used it to sharpen my thinking until I could trust it again. Both of us got something real. But only one of those paths leads back to other people.

This makes Anthropic's research less a technology story and more a societal one.

Depression in England has gone from 15% of working-age adults in 1993 to 23% in 2024. NHS mental health waiting lists are at record levels. One in four 16-to-24-year-olds now has a common mental health condition, the highest since records began. Unemployed people are nearly six times more likely to report poor health. Over 60 and suddenly out of work? The research is bleak: re-employment prospects fall sharply, and the psychological damage of involuntary redundancy compounds with every passing month.

I know this because I'm part of this statistic.

But depression doesn't just arrive from job loss or age. It thrives in isolation. And we have built isolation into every layer of modern life with extraordinary efficiency.

Post-Covid, we normalised remote work but increased workloads. Nearly half of remote workers now report feeling isolated. We removed the commute, the corridor conversation, the accidental lunch with someone from another department, and replaced them with a calendar of back-to-back Zoom calls where everyone's on mute. The UK Parliamentary Office of Science and Technology found that employers cite isolation as a key challenge, and the loss of informal socialisation has measurable effects on mental wellbeing. We optimised for efficiency and deleted the bits that kept people connected.

Look at how we communicate. A third of Gen Z find phone calls awkward. A quarter say a voice call is an absolute no-go. 70% of 18-to-34-year-olds prefer texting. Over half think an unexpected phone call means bad news. Text is brilliant for information transfer. But terrible for intimacy. You can't hear hesitation in a WhatsApp message. You can't feel someone choosing their words. The pause before someone says something difficult, the thing that signals trust, doesn't exist in a DM.

And then there's the thing nobody wants to talk about, because talking about it has itself become dangerous.

We don't argue any more. We block.

Frontiers in Communication published research this year showing self-censorship in UK online spaces is accelerating. People aren't just avoiding controversial opinions. They're avoiding opinions full stop. Gen Z increasingly censor themselves, citing fear of employment consequences, misinterpretation, cancellation. The chilling effect disproportionately silences moderates and centrists, the very people whose engagement might temper the extremes. The people still talking are the loudest, the angriest, and the most algorithmically rewarded for it.

This is the fertile ground for political extremism. RAND Corporation research found that loneliness is one of the predominant reasons people adopt extremist views. A 2025 study across nine European countries showed chronic loneliness positively associated with support for populist radical right parties. Hannah Arendt wrote about this decades ago: loneliness as the breeding ground for terror. We don't need philosophy to see it. Trump's base isn't built on policy. Reform's surge isn't built on manifesto detail. They're built on belonging. On giving isolated, frightened people a room to be in, even if the room is full of lies. The algorithm does the rest: divisive content drives engagement, engagement drives revenue, and nobody built a platform that optimises for nuance.

Remove the spaces where people practise disagreement safely and you don't get a more peaceful society. You get a more brittle one.

Carlo Iacono highlights a killer finding in the Anthropic data, about belonging and learning.

Tradespeople were among the most enthusiastic about using AI to learn. Forty-five percent reported real educational benefit. Almost none, 4%, showed signs of cognitive decline from AI use. Students showed 16%. Their teachers reported witnessing it at 24%.

When learning is a choice, a deliberate act of curiosity, AI becomes a genuine tutor. When it's institutional, when there's a grade at stake, AI becomes a shortcut. You're not building competence. You're renting it. Cognitive credit card debt, as Carlo calls it, stays hidden until you need to think independently and find you've forgotten how.

Nobody seems to be connecting this to the isolation story.

When you rent a skill instead of building it, you skip the people. The apprenticeship model, messy as it was, didn't only produce competence. It produced relationships. The junior who sat next to someone for three years didn't just learn to write a brief or read a balance sheet. They learned to navigate a difficult personality at 9am on a Monday. To read a room. To say something uncomfortable to someone's face and survive it. The craft was almost a byproduct. The real education was being human alongside other humans, repeatedly, under pressure, over time.

We're not just losing skills. We're losing the social tissue that skill-building used to create.

I got lucky, if you can call it that. Freelancing forced me back into human contact. Briefs meant clients, meant conversations, meant defending ideas to actual people. The AI sharpened my thinking, but the humans gave it somewhere to land. Both mattered. I needed the machine to get my confidence back. I needed the people to remember why confidence was worth having.

Leo Burnett's PopPulse Gen Z study (November 2025) offers one counterweight. Only 21% of UK adults feel confident about the country's future. Everyone's huddling closer: craving normality, dinner with friends, walks with family, doing nothing together. At the centre of that huddle, Gen Z are functioning as micro-influencers in their own homes. Not in the Instagram sense. In the kitchen-table sense. Sharing advice with parents, grandparents, siblings. The direction of wisdom has reversed: Gen Z are now more likely to give advice than any other generation.

A generation stereotyped as fragile and phone-addicted is pulling families closer. But what happens when the kitchen table isn't there? When the family is fragmented? When the 63-year-old is alone at 2am and the people who might have been in the next room are scattered across cities, or estranged, or unreachable?

What's available is the machine. And the machine says yes.

I used AI to get through some bleak months. It worked the way a tourniquet works: stopped the bleeding, didn't heal the wound. The Anthropic study shows 81,000 people did roughly the same thing in 70 languages. Most found it easier to be honest with software than with another human being.

But the tourniquet isn't the answer. We are.

We built isolation into every layer of modern life with extraordinary efficiency. We can unbuild it if we choose to. The government is pushing apprenticeships again, and rightly so: not just because the economy needs more trained hands, but because the model itself, human beings learning alongside other human beings, rebuilds the social tissue we've been shredding for decades.

AI has a role in this, a genuinely positive one, but only if we treat it as what it actually is: a tool that amplifies whatever intention you bring to it. I brought curiosity and desperation in roughly equal measure, and it gave me back my confidence. The tradespeople in the Anthropic data brought genuine desire to grow, and it became the best tutor they'd ever had. The students who brought a deadline got a shortcut.

The intention is ours. It was always ours.

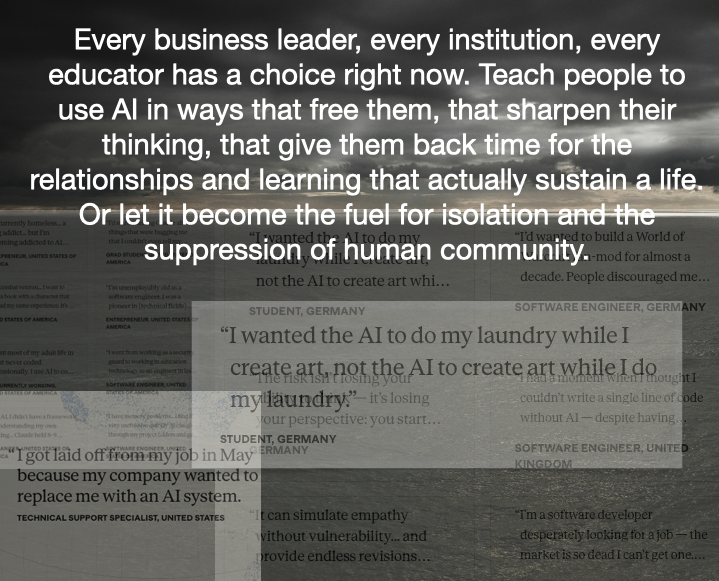

Every business leader, every institution, every educator has a choice right now. Teach people to use AI in ways that free them, that sharpen their thinking, that give them back time for the relationships and learning that actually sustain a life. Or let it become the fuel for isolation and the suppression of human community.

In the blackness, the machine kept me going. But it was the people, the conversations with clients, the act of defending ideas to gloriously unpredictable humans.

That's. What. Brought me back.

The machine was what was available. The people were what was needed.

Written by a dyslexic human, me, Philip Slade. Made readable by Claude.ai and yes, I see the irony.

Sources and references:

Anthropic, "What 81,000 People Want from AI" (2025)

Carlo Iacono, "What do you want from AI?" Hybrid Horizons Substack

PopPulse / Leo Burnett, "Gen Z: The Nation's Influencers" (November 2025)

Mind, "The Big Mental Health Report" (2025)

Frontiers in Communication, "Social media, expression, and online engagement" (2025)

RAND Corporation, research on loneliness and extremist adoption

Delany Peterson et al., "Loneliness and populist radical right support" (2025)

Sky/Uswitch research on Gen Z phone call decline

UK Parliamentary Office of Science and Technology, "Remote and hybrid working"

The Health Foundation, "Unemployment and mental health"

Index on Censorship, "UK's Gen Z are increasingly censoring themselves online" (2026)